Generative AI is one of the most important trends in the history of personal computing, bringing advancements to gaming, creativity, video, productivity, development and more.

And GeForce RTX and NVIDIA RTX GPUs, which are packed with dedicated AI processors called Tensor Cores, are bringing the power of generative AI natively to more than 100 million Windows PCs and workstations.

Today, generative AI on PC is getting up to 4x faster via TensorRT-LLM for Windows, an open-source library that accelerates inference performance for the latest AI large language models, like Llama 2 and Code Llama. This follows the announcement of TensorRT-LLM for data centers last month.

NVIDIA has also released tools to help developers accelerate their LLMs, including scripts that optimize custom models with TensorRT-LLM, TensorRT-optimized open-source models and a developer reference project that showcases both the speed and quality of LLM responses.

TensorRT acceleration is now available for Stable Diffusion in the popular Web UI by Automatic1111 distribution. It speeds up the generative AI diffusion model by up to 2x over the previous fastest implementation.

Plus, RTX Video Super Resolution (VSR) version 1.5 is available as part of today's Game Ready Driver release, and will be available in the next NVIDIA Studio Driver, releasing early next month.

Supercharging LLMs With TensorRT

LLMs are fueling productivity (engaging in chat, summarizing documents and web content, drafting emails and blogs) and are at the core of new pipelines of AI and other software that can automatically analyze data and generate a vast array of content.

TensorRT-LLM, a library for accelerating LLM inference, gives developers and end users the benefit of LLMs that can now operate up to 4x faster on RTX-powered Windows PCs.

At higher batch sizes, this acceleration significantly improves the experience for more sophisticated LLM use, like writing and coding assistants that output multiple, unique auto-complete results at once. The result is accelerated performance and improved quality that lets users select the best of the bunch.

TensorRT-LLM acceleration is also beneficial when integrating LLM capabilities with other technology, such as in retrieval-augmented generation (RAG), where an LLM is paired with a vector library or vector database. RAG enables the LLM to deliver responses based on a specific dataset, like user emails or articles on a website, to provide more targeted answers.
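The retrieval step in RAG can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the NVIDIA reference project: the `embed`, `VectorLibrary` and `build_prompt` names are hypothetical, the "embedding" is a toy word count standing in for a learned embedding model, and the final call to an LLM is left out. It only shows how the most relevant article is found and placed into the prompt.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding (assumption): lowercase word counts instead of a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class VectorLibrary:
    """Hypothetical in-memory store of (document, vector) pairs."""

    def __init__(self, documents):
        self.entries = [(doc, embed(doc)) for doc in documents]

    def search(self, query, top_k=1):
        """Return the top_k documents most similar to the query."""
        query_vec = embed(query)
        ranked = sorted(
            self.entries, key=lambda entry: cosine(query_vec, entry[1]), reverse=True
        )
        return [doc for doc, _ in ranked[:top_k]]


def build_prompt(question, library, top_k=1):
    """Prepend retrieved context so the LLM answers from the chosen dataset."""
    context = "\n".join(library.search(question, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Illustrative dataset: one-line summaries standing in for news articles.
articles = [
    "RTX Video Super Resolution reduces compression artifacts in streamed video.",
    "TensorRT acceleration speeds up Stable Diffusion in the Automatic1111 Web UI.",
    "TensorRT-LLM accelerates large language model inference on Windows PCs.",
]
library = VectorLibrary(articles)
question = "What speeds up Stable Diffusion?"
prompt = build_prompt(question, library)
print(prompt)
```

In a real pipeline the prompt would then be passed to the LLM, which is the stage where inference acceleration matters; the retrieval stage only decides what context the model sees.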

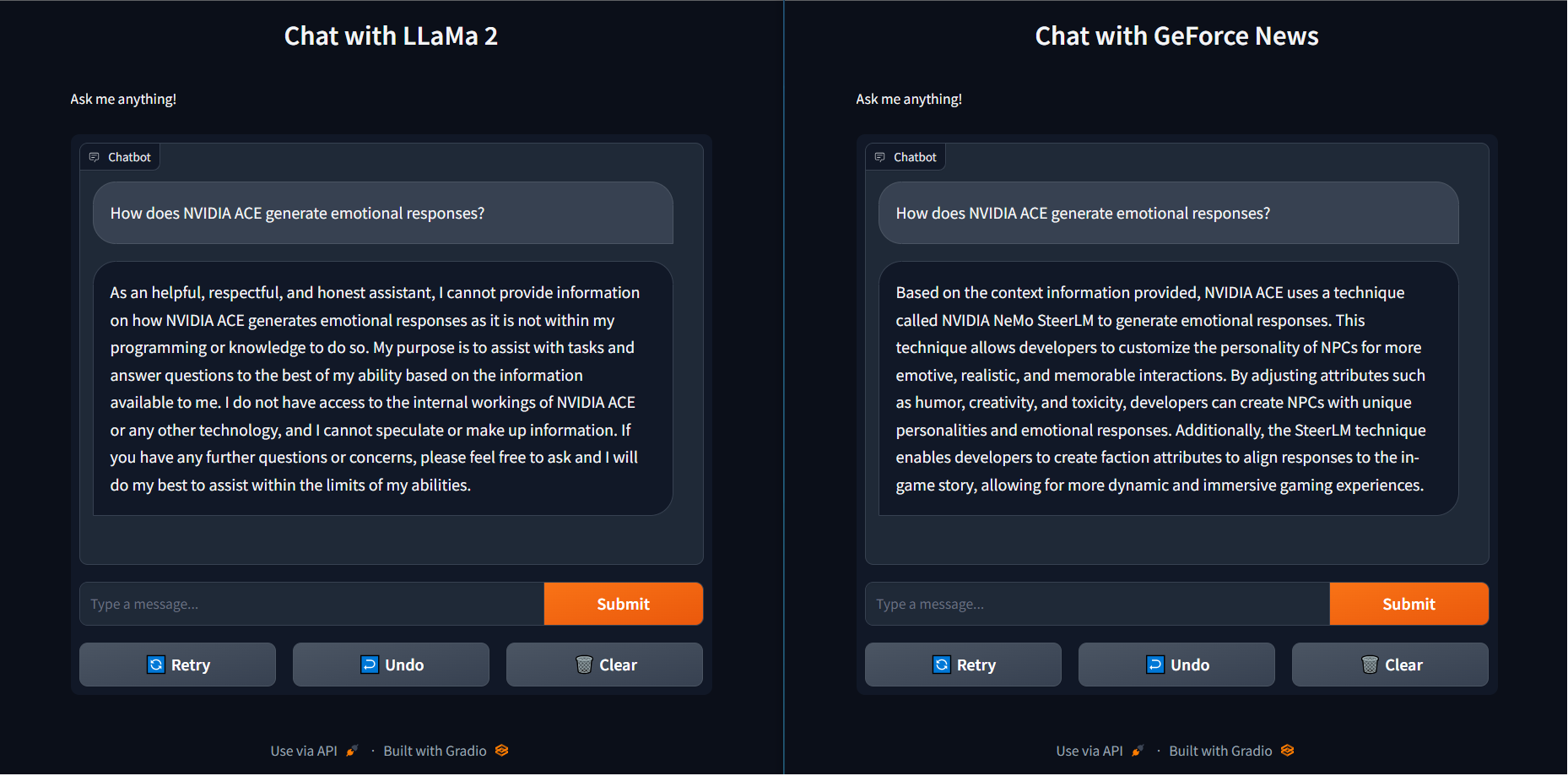

To show this in practical terms, when the question “How does NVIDIA ACE generate emotional responses?” was asked of the Llama 2 base model, it returned an unhelpful response.

Conversely, using RAG with recent GeForce news articles loaded into a vector library and connected to the same Llama 2 model not only returned the correct answer (using NeMo SteerLM) but did so much quicker with TensorRT-LLM acceleration. This combination of speed and proficiency gives users smarter solutions.

TensorRT-LLM will soon be available to download from the NVIDIA Developer website. TensorRT-optimized open-source models and the RAG demo with GeForce news as a sample project are available at ngc.nvidia.com and GitHub.com/NVIDIA.

Automatic Acceleration

Diffusion models, like Stable Diffusion, are used to imagine and create stunning, novel works of art. Image generation is an iterative process that can take hundreds of cycles to achieve the perfect output. When done on an underpowered computer, this iteration can add up to hours of wait time.

TensorRT is designed to accelerate AI models through layer fusion, precision calibration, kernel auto-tuning and other capabilities that significantly boost inference efficiency and speed. This makes it indispensable for real-time applications and resource-intensive tasks.
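To give a feel for what layer fusion means, the sketch below folds a per-output normalization step into the linear layer that precedes it, so two passes over the data become one. It is a conceptual illustration only, written in plain Python with hypothetical function names and made-up numbers; it does not use the TensorRT API and is not a description of how TensorRT implements fusion internally.

```python
import math


def linear(weights, bias, x):
    """Plain linear layer: y = W x + b."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def normalize(y, mean, var, gamma, beta, eps=1e-5):
    """Per-output scale and shift, as in inference-time batch normalization."""
    return [
        g * (v - m) / math.sqrt(s + eps) + b
        for v, m, s, g, b in zip(y, mean, var, gamma, beta)
    ]


def fuse(weights, bias, mean, var, gamma, beta, eps=1e-5):
    """Fold the normalization constants into the linear layer's weights and bias."""
    fused_w, fused_b = [], []
    for row, b, m, s, g, shift in zip(weights, bias, mean, var, gamma, beta):
        scale = g / math.sqrt(s + eps)
        fused_w.append([w * scale for w in row])
        fused_b.append((b - m) * scale + shift)
    return fused_w, fused_b


# Made-up parameters for a 2-input, 2-output layer (illustrative values only).
weights = [[0.5, -1.0], [2.0, 0.25]]
bias = [0.1, -0.3]
mean, var = [0.2, -0.1], [1.5, 0.8]
gamma, beta = [1.2, 0.9], [0.05, -0.02]
x = [3.0, -2.0]

# Unfused: two separate layers. Fused: one layer with precomputed parameters.
two_step = normalize(linear(weights, bias, x), mean, var, gamma, beta)
fused_w, fused_b = fuse(weights, bias, mean, var, gamma, beta)
one_step = linear(fused_w, fused_b, x)

outputs_match = all(
    math.isclose(a, b, rel_tol=1e-9, abs_tol=1e-12) for a, b in zip(two_step, one_step)
)
print("fused output matches unfused output:", outputs_match)
```

The fused layer produces the same result while skipping an intermediate pass over the activations, which is the general reason fusing adjacent operations can reduce inference time.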

And now, TensorRT doubles the speed of Stable Diffusion.

Compatible with the most popular distribution, WebUI from Automatic1111, Stable Diffusion with TensorRT acceleration helps users iterate faster and spend less time waiting on the computer, delivering a final image sooner. On a GeForce RTX 4090, it runs 7x faster than the top implementation on Macs with an Apple M2 Ultra. The extension is available for download today.

The TensorRT demo of a Stable Diffusion pipeline provides developers with a reference implementation showing how to prepare diffusion models and accelerate them using TensorRT. This is the starting point for developers interested in turbocharging a diffusion pipeline and bringing lightning-fast inferencing to applications.

Video That’s Super

AI is improving everyday PC experiences for all users. Streaming video from nearly any source (YouTube, Twitch, Prime Video, Disney+ and countless others) is among the most popular activities on a PC. Thanks to AI and RTX, it’s getting another update in image quality.

RTX VSR is a breakthrough in AI pixel processing that improves the quality of streamed video content by reducing or eliminating artifacts caused by video compression. It also sharpens edges and details.

Available now, RTX VSR version 1.5 further improves visual quality with updated models, de-artifacts content played in its native resolution and adds support for RTX GPUs based on the NVIDIA Turing architecture, both professional RTX and GeForce RTX 20 Series GPUs.

Retraining the VSR AI model helped it learn to accurately identify the difference between subtle details and compression artifacts. As a result, AI-enhanced images more accurately preserve details during the upscaling process. Finer details are more visible, and the overall image looks sharper and crisper.

New with version 1.5 is the ability to de-artifact video played at the display’s native resolution. The original release only enhanced video when it was being upscaled. Now, for example, 1080p video streamed to a 1080p resolution display will look smoother as heavy artifacts are reduced.

RTX VSR 1.5 is available today for all RTX users in the latest Game Ready Driver. It will be available in the upcoming NVIDIA Studio Driver, scheduled for early next month.

RTX VSR is among the NVIDIA software, tools, libraries and SDKs (like those mentioned above, plus DLSS, Omniverse, AI Workbench and others) that have helped bring over 400 AI-enabled apps and games to consumers.

The AI era is upon us. And RTX is supercharging it at every step in its evolution.