Samsung’s latest memory technology has hit staggering speeds of 9.8Gb/s per pin, or roughly 1.2TB/s, meaning it’s more than 50% faster than its predecessor.

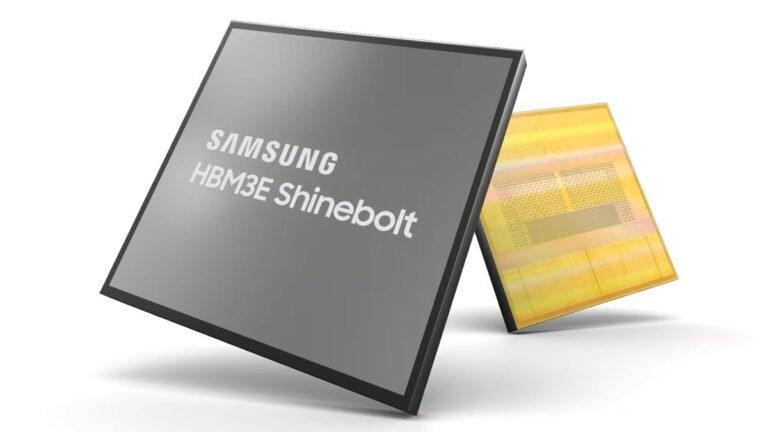

Samsung’s HBM3E memory, nicknamed Shinebolt, is the latest in a series of high-performance memory units the company has developed for the age of cloud computing and growing demand for resources.

Shinebolt is the successor to Icebolt, which comes in variants of up to 32GB and can reach speeds of up to 6.4Gb/s. These chips have been designed specifically for use alongside the best GPUs in AI processing and LLMs, with the firm ramping up production this year as the emerging industry gathers momentum.
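For readers wondering how 9.8Gb/s turns into 1.2TB/s, the short Python sketch below works through the arithmetic. It assumes the Gb/s figures are per-pin data rates and that each HBM stack exposes a 1024-bit interface; that width is not stated in this article and is taken here as an assumption about the HBM3 generation. The function and variable names are purely illustrative.

```python
# Assumption (not stated in the article): each HBM stack has a 1024-bit interface.
BUS_WIDTH_BITS = 1024


def stack_bandwidth_gb_per_s(pin_rate_gbit_per_s, bus_width_bits=BUS_WIDTH_BITS):
    """Convert a per-pin data rate in Gb/s into per-stack bandwidth in GB/s."""
    # Every pin carries pin_rate gigabits per second; divide by 8 for bytes.
    return pin_rate_gbit_per_s * bus_width_bits / 8


SHINEBOLT_PIN_RATE = 9.8  # Gb/s, HBM3E figure quoted in the article
ICEBOLT_PIN_RATE = 6.4    # Gb/s, HBM3 figure quoted in the article

shinebolt_gb_s = stack_bandwidth_gb_per_s(SHINEBOLT_PIN_RATE)
icebolt_gb_s = stack_bandwidth_gb_per_s(ICEBOLT_PIN_RATE)
speedup_percent = (SHINEBOLT_PIN_RATE / ICEBOLT_PIN_RATE - 1) * 100

print(f"Shinebolt: {shinebolt_gb_s / 1000:.2f} TB/s per stack")
print(f"Icebolt:   {icebolt_gb_s / 1000:.2f} TB/s per stack")
print(f"Speedup:   {speedup_percent:.0f}%")
```

Under those assumptions, the result lands at roughly 1.25TB/s per stack for Shinebolt against about 0.82TB/s for Icebolt, a gain of around 53%, which lines up with the "more than 50%" claim.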

Powering the next generation of AI chips

HBM3E will inevitably find its way into components developed by the likes of Nvidia, and there is every suggestion it may end up in the GH200, nicknamed Grace Hopper, in light of a recent deal Samsung struck with the chipmaker.

High-bandwidth memory (HBM) is much faster and more energy efficient than conventional RAM, and uses 3D stacking technology, which allows layers of chips to be stacked on top of one another.

Samsung’s HBM3E stacks layers higher than in previous iterations through the use of non-conductive film (NCF) technology, which eliminates gaps between the layers in the chips. Thermal conductivity is maximized, and the memory can ultimately hit much higher speeds and efficiency as a result.

The unit will power the next generation of AI applications, Samsung claims, as it’ll speed up AI training and inference in data centers and improve the total cost of ownership (TCO).

Even more exciting is the prospect that it’ll be included in Nvidia’s next-gen AI chip, the H200. The two companies struck an agreement in September in which Samsung would supply the chipmaker with HBM3 memory units, according to the Korea Economic Daily, with Samsung set to supply roughly 30% of Nvidia’s memory by 2024.

Should this partnership continue, there’s every possibility HBM3E components will become part of this deal once they enter mass production.