Between integrating its Grace Hopper chip directly with a quantum processor and showing off the ability to simulate quantum systems on classical supercomputers, Nvidia is making waves in the quantum computing world this month.

Nvidia is certainly well-positioned to take advantage of the latter. It makes the GPUs that supercomputers use, the same GPUs that AI developers crave. These same GPUs are also valuable as tools for simulating dozens of qubits on classical computers. New software developments mean that researchers can now use more and more supercomputing resources in lieu of real quantum computers.

But simulating quantum systems is a uniquely demanding challenge, and those demands loom in the background.

Few quantum computer simulations to date have been able to use more than one multi-GPU node, or even more than a single GPU. But Nvidia has made recent behind-the-scenes advances that are now making it possible to ease these bottlenecks.

Classical computers serve two roles in simulating quantum hardware. For one, quantum-computer builders can use classical computation to test-run their designs. “Classical simulation is a fundamental aspect of understanding and design of quantum hardware, often serving as the only means to validate these quantum systems,” says Jinzhao Sun, a postdoctoral researcher at Imperial College London.

For another, classical computers can run quantum algorithms in lieu of an actual quantum computer. It’s this capability that especially interests researchers who work on applications like molecular dynamics, protein folding, and the burgeoning field of quantum machine learning, all of which benefit from quantum processing.
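To make "running a quantum algorithm on a classical computer" concrete, here is a minimal sketch of a full state-vector simulation in plain Python. It is a toy illustration, not how cuQuantum or any production simulator is implemented; the function names (`apply_single_qubit_gate`, `apply_cnot`, `bell_state`) are made up for this example. It prepares a two-qubit entangled (Bell) state by tracking all 2^n complex amplitudes explicitly.

```python
import math

# Hadamard gate as a 2x2 matrix (nested lists).
_S = 1 / math.sqrt(2)
H = [[_S, _S], [_S, -_S]]

def apply_single_qubit_gate(state, gate, target):
    """Apply a 2x2 gate to qubit `target` of a state vector.

    `state` is a list of 2**n complex amplitudes; bit `target` of each
    index says whether that qubit is 0 or 1 in the basis state.
    """
    new_state = list(state)
    mask = 1 << target
    for i in range(len(state)):
        if i & mask == 0:          # visit each (|0>, |1>) index pair once
            j = i | mask
            a, b = state[i], state[j]
            new_state[i] = gate[0][0] * a + gate[0][1] * b
            new_state[j] = gate[1][0] * a + gate[1][1] * b
    return new_state

def apply_cnot(state, control, target):
    """Flip qubit `target` wherever qubit `control` is 1."""
    new_state = list(state)
    for i in range(len(state)):
        if (i >> control) & 1 and not (i >> target) & 1:
            j = i | (1 << target)
            new_state[i], new_state[j] = state[j], state[i]
    return new_state

def bell_state():
    """Start in |00>, apply H to qubit 0, then CNOT(0 -> 1)."""
    state = [0j] * 4
    state[0] = 1 + 0j
    state = apply_single_qubit_gate(state, H, 0)
    return apply_cnot(state, 0, 1)

def probabilities(state):
    """Measurement probabilities for each basis state."""
    return [abs(amp) ** 2 for amp in state]

if __name__ == "__main__":
    print(probabilities(bell_state()))
```

Every gate touches the whole list of amplitudes, and that list doubles in length with each added qubit, which is why larger simulations quickly need GPU-scale memory and bandwidth.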

Classical simulations are not perfect replacements for the genuine quantum articles, but they often make suitable facsimiles. The world only has so many quantum computers, and classical simulations are easier to access. Classical simulations can also control the noise that plagues real quantum processors and often scuttles quantum runs. Classical simulations may be slower than genuine quantum ones, but researchers still save time because they need fewer runs, according to Shinjae Yoo, a computer science and machine learning staff researcher at Brookhaven National Laboratory.

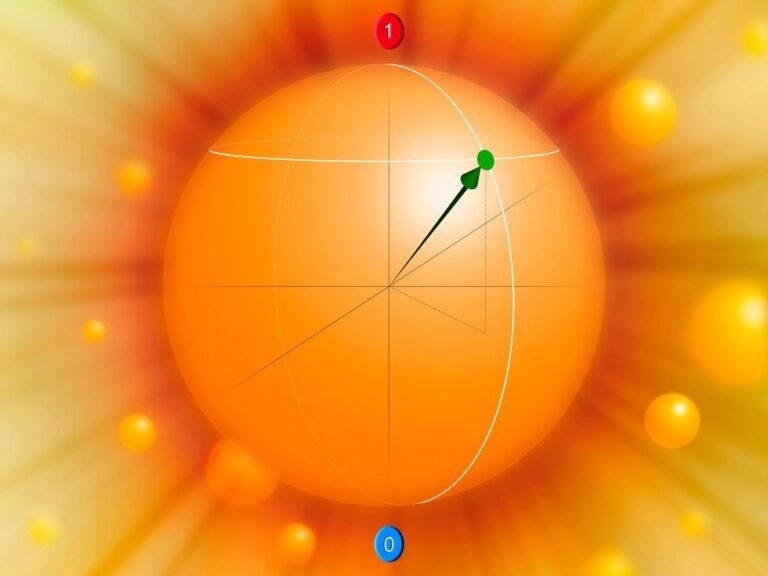

The catch, then, is a size problem. Because a qubit in a quantum system can be entangled with every other qubit in that system, the demands of accurately simulating that system scale exponentially. As a rule of thumb, every additional qubit doubles the amount of classical memory the simulation needs. Moving from a single GPU to an entire eight-GPU node, an eightfold (2^3) increase in memory, buys just three more qubits.
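The doubling rule can be checked with a few lines of arithmetic. The sketch below assumes a full state-vector simulation that stores one double-precision complex number (16 bytes) per amplitude and ignores any workspace overhead; the 40 GiB per-GPU figure is only an illustrative value, and the helper names are invented for this example.

```python
BYTES_PER_AMPLITUDE = 16  # assumption: complex128, i.e. two 64-bit floats

def statevector_bytes(n_qubits, bytes_per_amplitude=BYTES_PER_AMPLITUDE):
    """Memory needed to hold all 2**n amplitudes of an n-qubit state."""
    return (2 ** n_qubits) * bytes_per_amplitude

def max_qubits(memory_bytes, bytes_per_amplitude=BYTES_PER_AMPLITUDE):
    """Largest n such that an n-qubit state vector fits in memory_bytes."""
    amplitudes = memory_bytes // bytes_per_amplitude
    if amplitudes < 1:
        raise ValueError("not enough memory for even a single amplitude")
    # floor(log2(amplitudes)) computed exactly with integer arithmetic
    return amplitudes.bit_length() - 1

if __name__ == "__main__":
    GIB = 2 ** 30
    one_gpu = 40 * GIB        # illustrative per-GPU memory, an assumption
    node = 8 * one_gpu        # eight such GPUs in one node
    print("single GPU :", max_qubits(one_gpu), "qubits")
    print("8-GPU node :", max_qubits(node), "qubits")
    print("30 qubits need", statevector_bytes(30) // GIB, "GiB")
```

Under these assumptions, multiplying the available memory by eight raises the qubit ceiling by exactly three, and doubling it raises the ceiling by one.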

Many researchers still dream of pressing as far up this exponential slope as they can manage. “If we’re doing, let’s say, molecular dynamics simulation, we want a much bigger number of atoms and a larger-scale simulation to have a more realistic simulation,” Yoo says.

GPUs are key footholds. Swapping in a GPU for a CPU, Yoo says, can speed up a simulation of a quantum system by an order of magnitude. That kind of acceleration may not come as a surprise, but few simulations have been able to take full advantage because of bottlenecks in sending information between GPUs. Consequently, most simulations have stayed within the confines of one multi-GPU node or even a single GPU within that node.

Several behind-the-scenes advances are now making it possible to ease these bottlenecks. Closer to the surface, Nvidia’s cuQuantum software development kit makes it easier for researchers to run quantum simulations across multiple GPUs. Where GPUs previously needed to communicate via the CPU, creating an additional bottleneck, collective communications frameworks like Nvidia’s NCCL let users conduct operations like memory-to-memory copy directly between nodes.

cuQuantum pairs with quantum computing toolkits such as Canadian startup Xanadu’s PennyLane. A stalwart in the quantum machine learning community, PennyLane lets researchers use machine learning tools like PyTorch with quantum computers. While PennyLane is designed for use on real quantum hardware, PennyLane’s developers specifically added the capability to run on multiple GPU nodes.

On paper, these advances can allow classical computers to simulate around 36 qubits. In practice, simulations of that size demand too many node hours to be practical. A more realistic gold standard today is in the upper 20s. Still, that’s an additional ten qubits over what researchers could simulate just a few years ago.

To wit, Yoo performs his work on the Perlmutter supercomputer, which is built from several thousand Nvidia A100 GPUs. Those chips are sought for their prowess in training and running AI models, even in China, where their sale is restricted by U.S. government export controls. Quite a few other supercomputers in the West use A100s as their backbones.

Can classical hardware continue to grow in size? The challenge is immense. The jump from an Nvidia DGX with 160 GB of GPU memory to one with 320 GB of GPU memory is a jump of just one qubit. Jinzhao Sun believes that classical simulations attempting to simulate more than 100 qubits will likely fail.

Real quantum hardware, at least on the surface, has already long outstripped those qubit numbers. IBM, for instance, has steadily ramped up the number of qubits in its own general-purpose quantum processors into the hundreds, with ambitious plans to push those counts into the thousands.

That doesn’t mean simulation won’t play a part in a thousand-qubit future. Classical computers can play important roles in simulating parts of larger systems, whether that means validating their hardware or testing algorithms that might someday run at full size. It turns out that there’s quite a lot you can do with 29 qubits.
